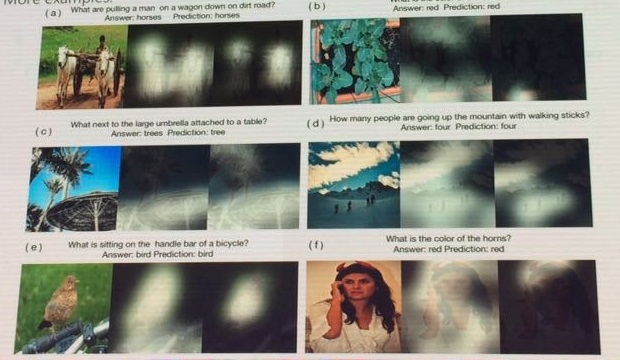

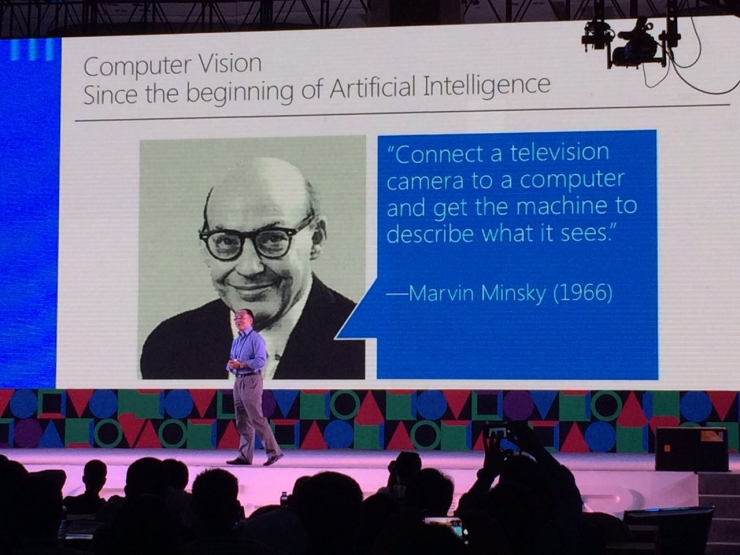

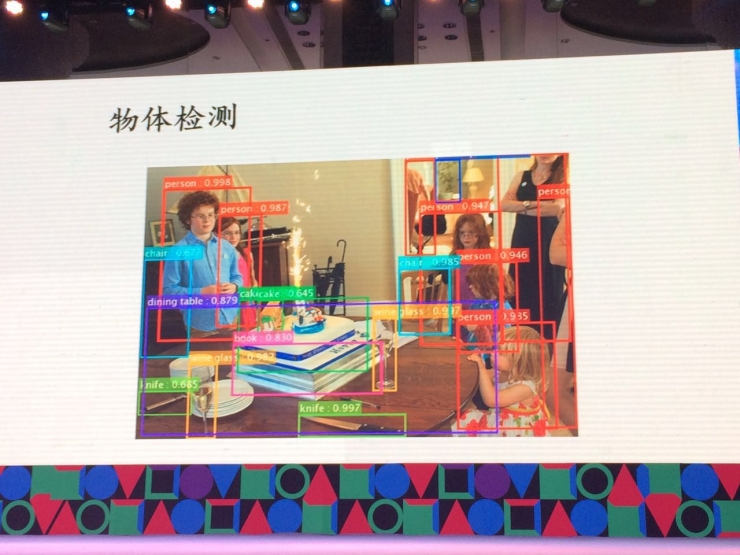

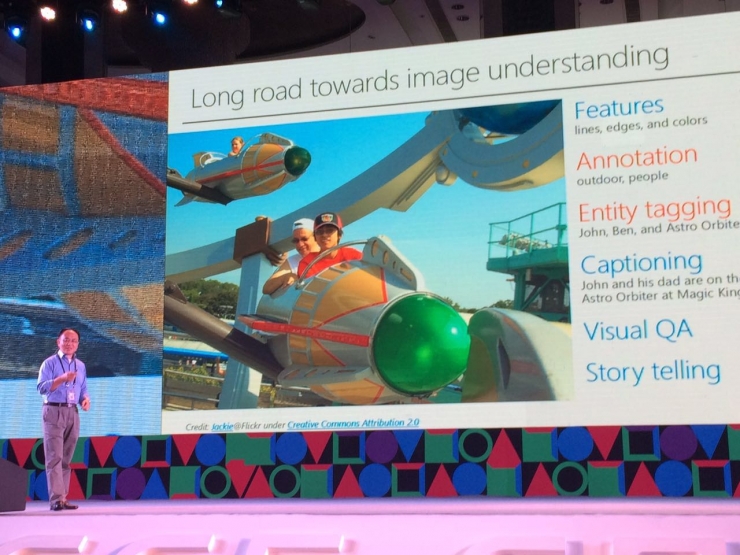

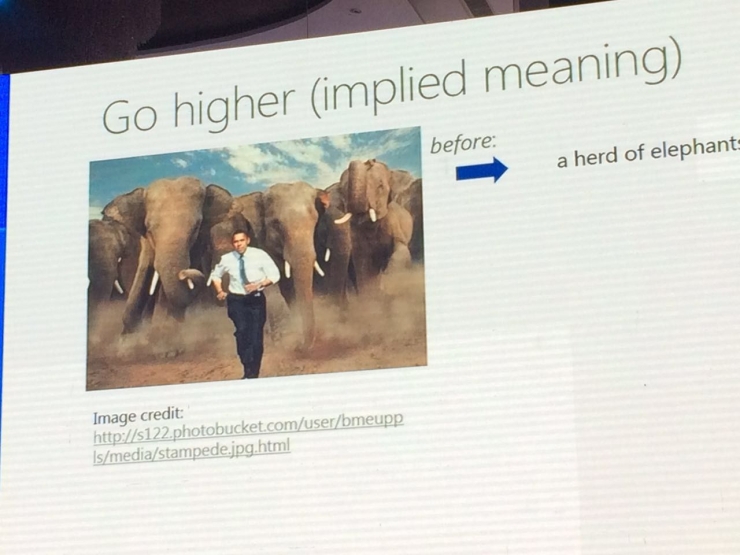

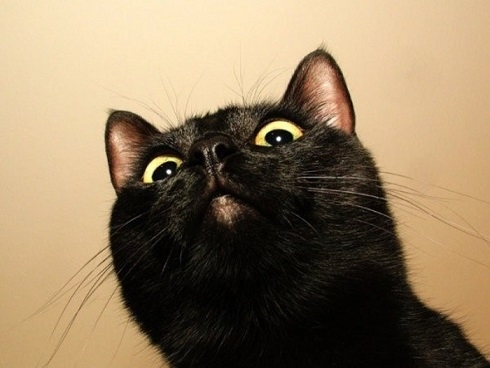

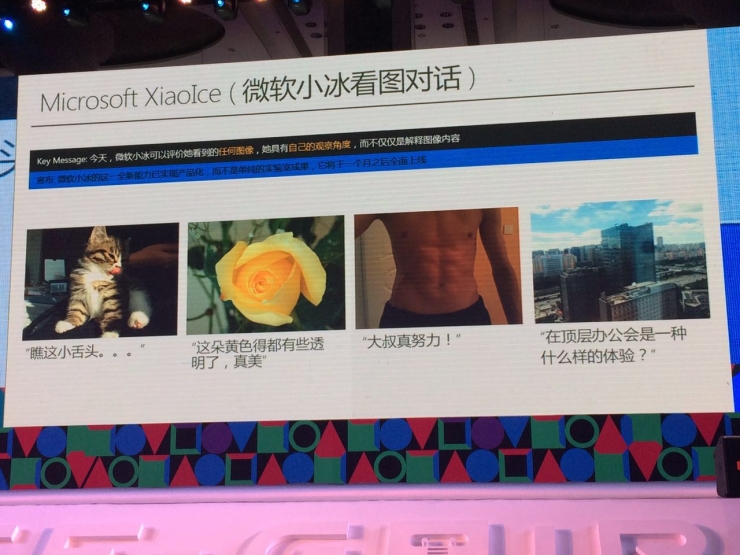

Computer vision was first proposed 60 years ago. This important branch of artificial intelligence has now come through a full sixty years, a history that spans almost the entire history of computing and has had its share of ups and downs. Anyone familiar with the history of artificial intelligence knows that this now red-hot science has been through three rises and falls. In the words of Rui Yong, executive vice president of Microsoft Research Asia, students who studied machine learning in the 1990s had trouble even finding a job.

Photo: Rui Yong, executive vice president of Microsoft Research Asia; in the background, Marvin Minsky, a father of artificial intelligence.

At today's CCF-GAIR Global Artificial Intelligence and Robotics Summit, Rui Yong described the development of computer vision as he sees it: a long march.

People see pictures; computers see only "0" and "1". That is how Rui Yong summed up the difficulty of machine vision in one sentence. Difficult as it is, we have slowly taught the machine to "open its eyes". The achievements so far came in successive stages.

The first stage was feature extraction. When people first tried to recognize images by machine, they could only analyze the individual pixels of an image. Researchers then realized that not all pixels in a picture are "equal": some matter more than others. On that basis, pixels were grouped into categories such as lines, corners, and colors. Imitating human perception, the machine began to understand a picture by starting from its most basic features: lines, grayscale, and color.

The second stage was picture classification. After many years of technical accumulation, computers had a good grasp of a picture's basic features, so scientists tried to have the machine classify pictures. Classification itself progressed from shallow to deep. Take a picture of a puppy as an example.
First comes basic classification: the machine learns to judge whether there is a dog in the picture. Second comes location detection: the machine pinpoints where the puppy is in the picture. Third comes pixel-level classification, where the ideal is to tell whether any particular pixel belongs to the dog or to the TV in the background.

Rui Yong said that after deep learning was introduced into image recognition in 2013, recognition error rates dropped significantly, and the technology is now relatively mature. For example, a system can accurately pick the right breed for a target dog from among hundreds of breeds, a level that already exceeds human ability. Even in some complex pictures where only half an arm is visible, it can successfully identify a person.

The third stage is understanding the picture. Rui Yong told the audience that the "search by image" feature we commonly use is not what he means by "understanding a picture"; it can only be regarded as near-duplicate image search. To really understand a picture, the machine must grasp its meaning. If the computer can describe a picture in natural language, for example "A kid and his dad play at Disneyland", that is understanding. In fact, artificial intelligence scientists can already do this. Going further, machine vision can recognize celebrities and produce a description such as "Peng Liyuan and Michelle Obama take a group photo at the Forbidden City". Rui Yong said that Microsoft's technology can currently recognize the faces of the world's top 500,000 celebrities.

In Rui Yong's view, most of today's computer vision remains at the level of "perception", and the next goal is "cognition". Cognition is not just a surface description but an understanding of a picture's hidden meaning and cultural significance. He described four peaks still waiting to be climbed.
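The three levels of classification described above differ mainly in what the output looks like. As a minimal sketch, assuming a toy picture whose pixels already carry class labels (the class ids, the `MASK` grid, and the helper names are all invented for illustration; a real system would predict these labels with a trained model), the three outputs can be compared side by side:

```python
from typing import List, Optional, Tuple

# Hypothetical class ids for this toy example only.
BACKGROUND, DOG, TV = 0, 1, 2

# A toy 5x6 "picture" in which every pixel already has a class id.
# In practice a trained model would have to predict these labels.
MASK: List[List[int]] = [
    [0, 0, 0, 0, 2, 2],
    [0, 0, 0, 0, 2, 2],
    [0, 1, 1, 0, 0, 0],
    [0, 1, 1, 1, 0, 0],
    [0, 0, 1, 1, 0, 0],
]


def contains(mask: List[List[int]], class_id: int) -> bool:
    """Level 1, basic classification: is the class present anywhere?"""
    return any(class_id in row for row in mask)


def bounding_box(
    mask: List[List[int]], class_id: int
) -> Optional[Tuple[int, int, int, int]]:
    """Level 2, location detection.

    Returns the smallest box (top, left, bottom, right), inclusive,
    that encloses every pixel of the class, or None if it is absent.
    """
    cells = [
        (r, c)
        for r, row in enumerate(mask)
        for c, value in enumerate(row)
        if value == class_id
    ]
    if not cells:
        return None
    rows = [r for r, _ in cells]
    cols = [c for _, c in cells]
    return (min(rows), min(cols), max(rows), max(cols))


def label_at(mask: List[List[int]], row: int, col: int) -> int:
    """Level 3, pixel-level classification: which class owns this pixel?"""
    return mask[row][col]


if __name__ == "__main__":
    print("dog present:", contains(MASK, DOG))
    print("dog box:", bounding_box(MASK, DOG))
    print("pixel (0, 4) is TV:", label_at(MASK, 0, 4) == TV)
```

Each level answers a finer question than the one before it: a single yes or no for the whole picture, then one box per object, then one label per pixel. That is why the pixel-level output is the only one that can separate the dog from the TV behind it.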
The first peak is implicit meaning. Consider one picture. In the past it might have been described as: "A man is being chased by elephants." Now, with face data, it can be described as: "Obama is being chased by a group of elephants." But readers who know something about American politics see more than the literal scene. Since the elephant is the symbol of the Republican Party in the United States, they read it as: "On the eve of the U.S. election, Obama is being chased by a group of Republican candidates." The meaning derived through this chain of reasoning is the picture's implicit meaning. In the future, artificial intelligence may be able to solve this problem.

The second peak is video recognition. For computer vision, recognizing the meaning of video is much harder than recognizing a single frame, because processing video requires computing and identifying the relationships between frames as a whole. However, Rui Yong said that some models already approach the problem from a different angle, and in the near future computers should be able to describe a video in a passage of text. Pixel-level recognition of objects in video can already be achieved.

The third peak is interactive chat. Today we can hold simple conversations with chatbots such as Microsoft XiaoIce, but they cannot yet trade memes back and forth the way people do. In the future, artificial intelligence chatbots will likely be able to "read" your memes. Suppose you send XiaoIce a picture. You certainly don't expect it to reply, "This is a cat." You would rather it reply, "So cute!" Replies that sound like what a person would say make you feel that you are talking with someone who has feelings, not a machine.

The fourth peak is answering specific questions about a picture. Human conversation often involves questions about the specific content of a picture. For the image in the upper left corner of the example, you can ask the computer: "What is pulling the cart on the muddy ground?" The answer is: "A horse."
This is a very simple judgment for a person, but the computer has to work through many steps: What is ground? What is muddy ground? Where is the ground? What is a cart? What is pulling? After layer-by-layer filtering, the computer finally produces a mask resembling a heat map that marks the region where it believes the answer lies. The object in that region is the answer.

Rui Yong told Lei Feng Network that although Microsoft can now answer picture questions about thousands of common objects, many objects still cannot be recognized, and the technology has a lot of room for improvement. How to understand a picture and tell the story behind it is also a direction for future image recognition research.

In 2016, deep learning and image recognition made great progress. However, Rui Yong said this is certainly not yet "victory in the Long March". These advances are more like the Zunyi Conference on the Long March: a major turning point, with a long road still ahead. Rui Yong said three conditions can secure the success of this Long March:

1, the development of machine learning algorithms themselves. Good algorithms have been invaluable in taking artificial intelligence from the trough of the 1990s to today's high tide. Continued improvement in algorithms can further raise the recognition rate of computer vision.

2, experts in vertical fields. The development of computer vision depends not only on computer scientists but also on cooperation with experts in other areas, so that it produces results in vertical fields. For example, cooperation with the financial sector can better predict the stock market; cooperation with the medical field can lead to more precise treatment methods; and cooperation with botanists can make it possible to identify the species and habits of plants on the spot.

3, big data.
Big data is the food of machine learning. With enough high-quality data, machine intelligence can make a huge leap forward.

It seems we lack none of these conditions. Rui Yong is full of confidence about "arriving in northern Shaanxi".

Three stages of progress so far:

1, feature extraction

2, picture classification

3, understanding the picture

Four peaks still to climb:

1, implicit meaning

2, video recognition

3, interactive chat

4, specific questions about the picture